Latest Features

Latest features and updates in llms.py

Get the latest features by updating to the latest version:

pip install llms-py --upgrade

After upgrading, it's recommended to also upgrade any external extensions:

llms --update all

May 9, 2026

Check out the latest models, including Grok 4.3, GPT-5.5, GPT Image 2, Opus 4.7, Sonnet 4.6, Gemini 3.1, Gemma 4, DeepSeek v4, Kimi K2.6, GLM 5.1, MiMo V2.5 and more.

Recraft Image Generation Models

New Recraft image generation models are now available from OpenRouter:

Recraft V3 ($0.04 per image)

Recraft V4 ($0.04 per image)

Recraft V4 Pro ($0.25 per image)

April 25, 2026

You can now use your existing Claude Code subscription by changing the Anthropic provider's npm configuration in your llms.json to use @ai-sdk/anthropic-cli instead of @ai-sdk/anthropic, e.g.:

{
  "anthropic": {
    "enabled": true,
    "npm": "@ai-sdk/anthropic-cli",
    ...
  }
}

This provider routes all requests to the claude binary, so its functionality and integration are limited, e.g. it doesn't support tool calling or skills. Otherwise it supports the other features you'd expect, like System Prompts, Chat History, and Image and Document attachments.

At the same time, it benefits from some of the smarts in the claude binary, such as its built-in system prompts and optimizations for handling long conversations, so it can be a good option for some use cases.

Mar 3, 2026

Support for Fireworks Large Language Models

Added support for Fireworks AI as a new provider, a fast inference platform hosting the leading open-source models including GLM 5, Kimi K2.5, MiniMax M2.5 and DeepSeek V3.2 at a market-leading 200 tok/s.

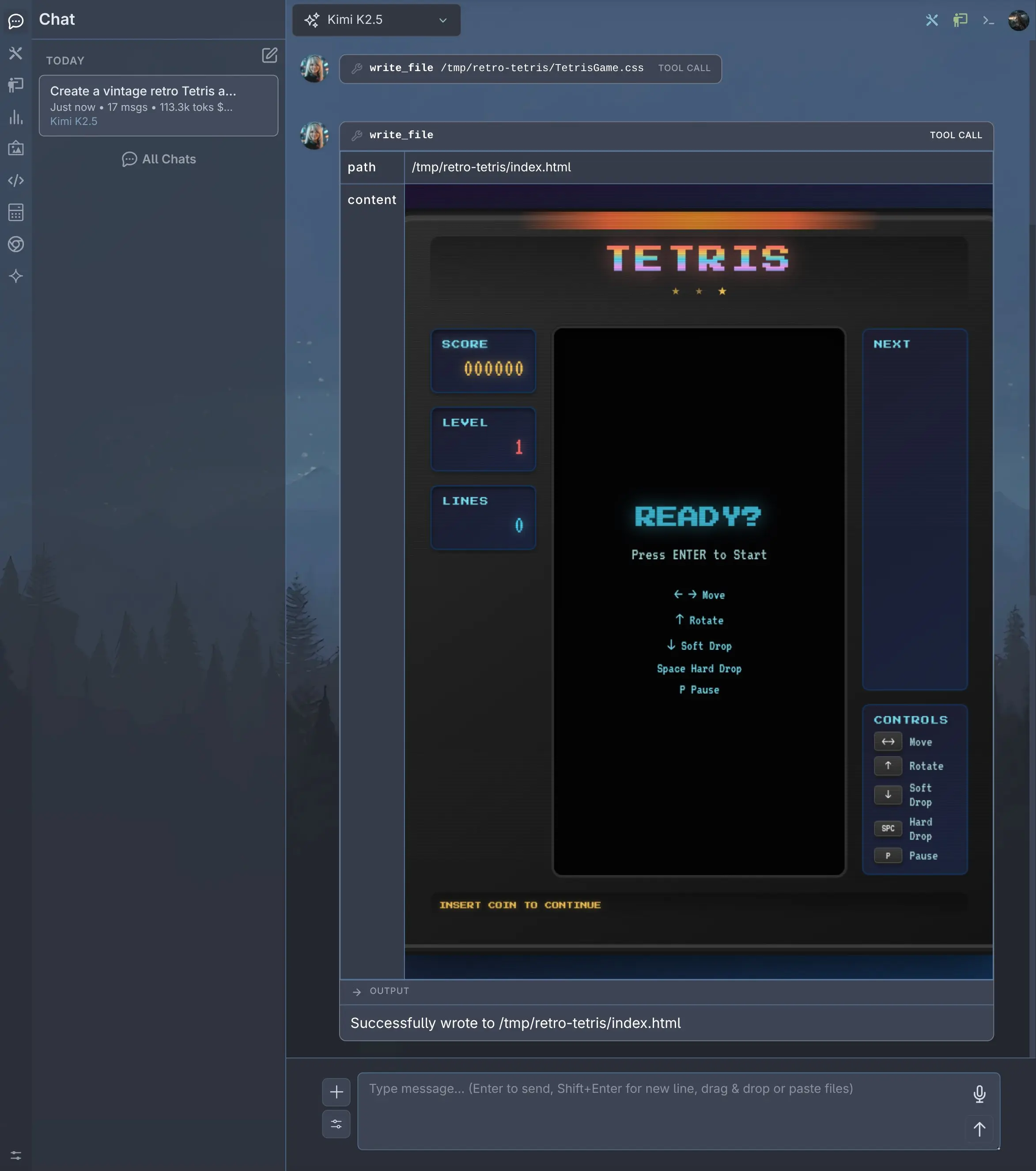

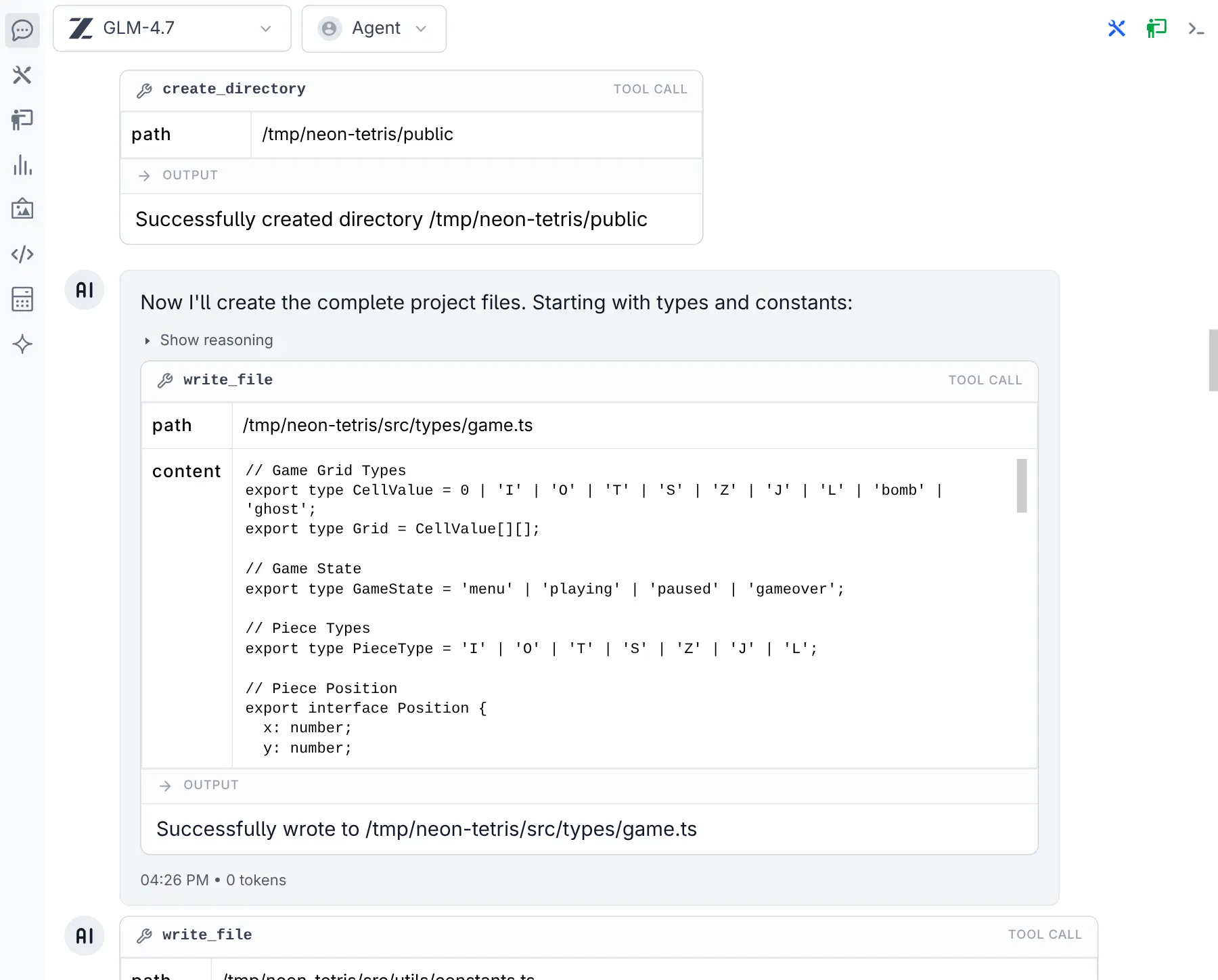

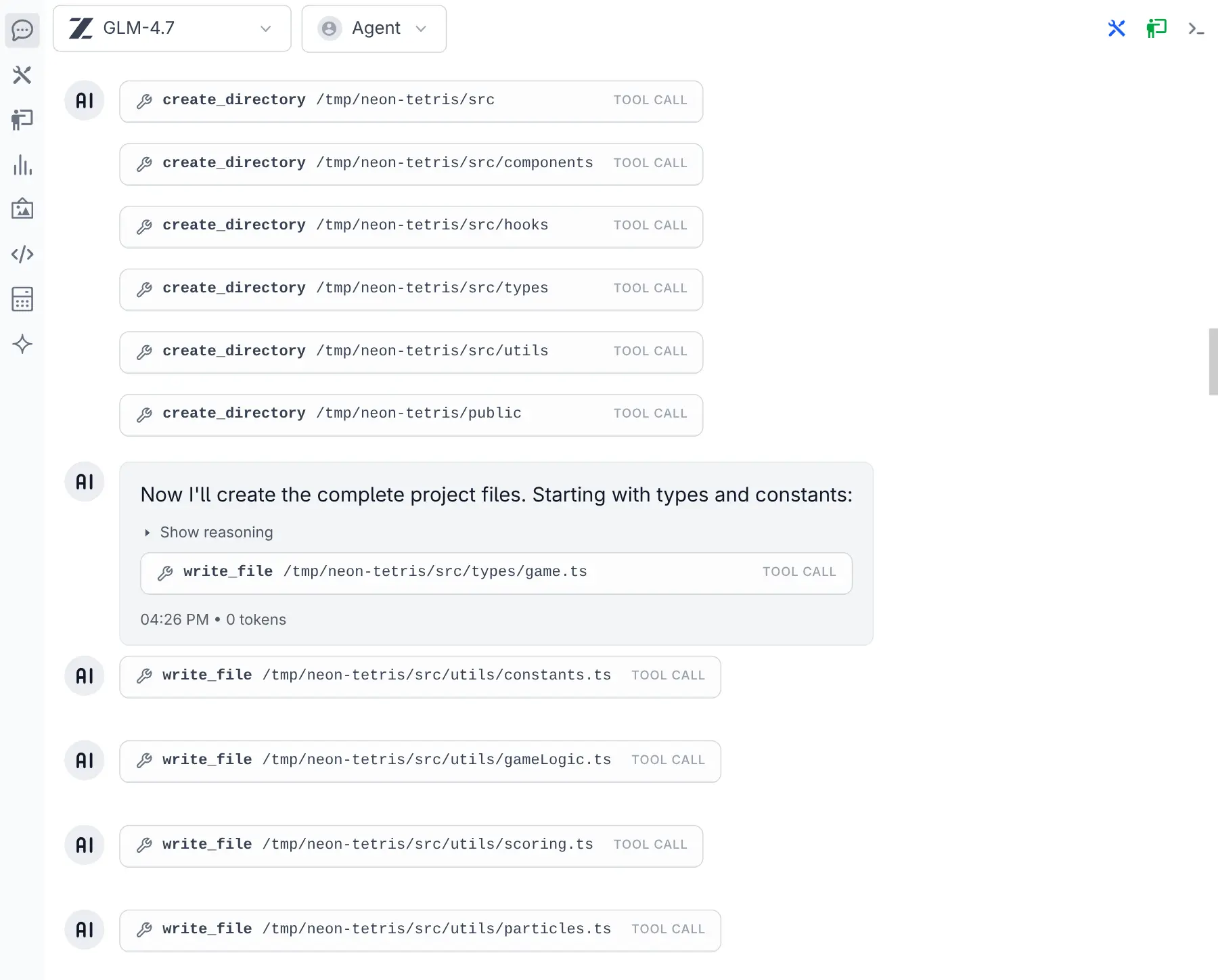

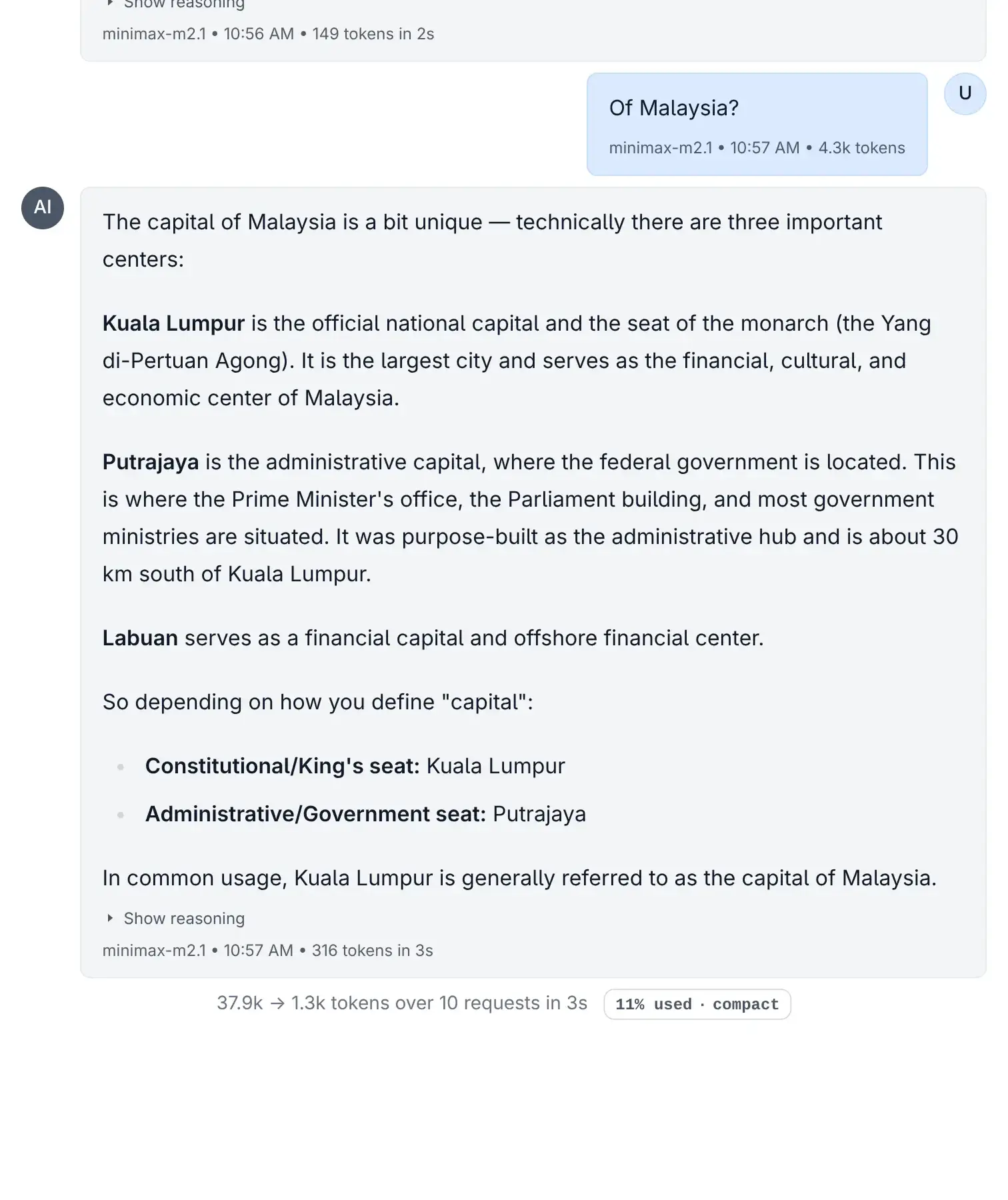

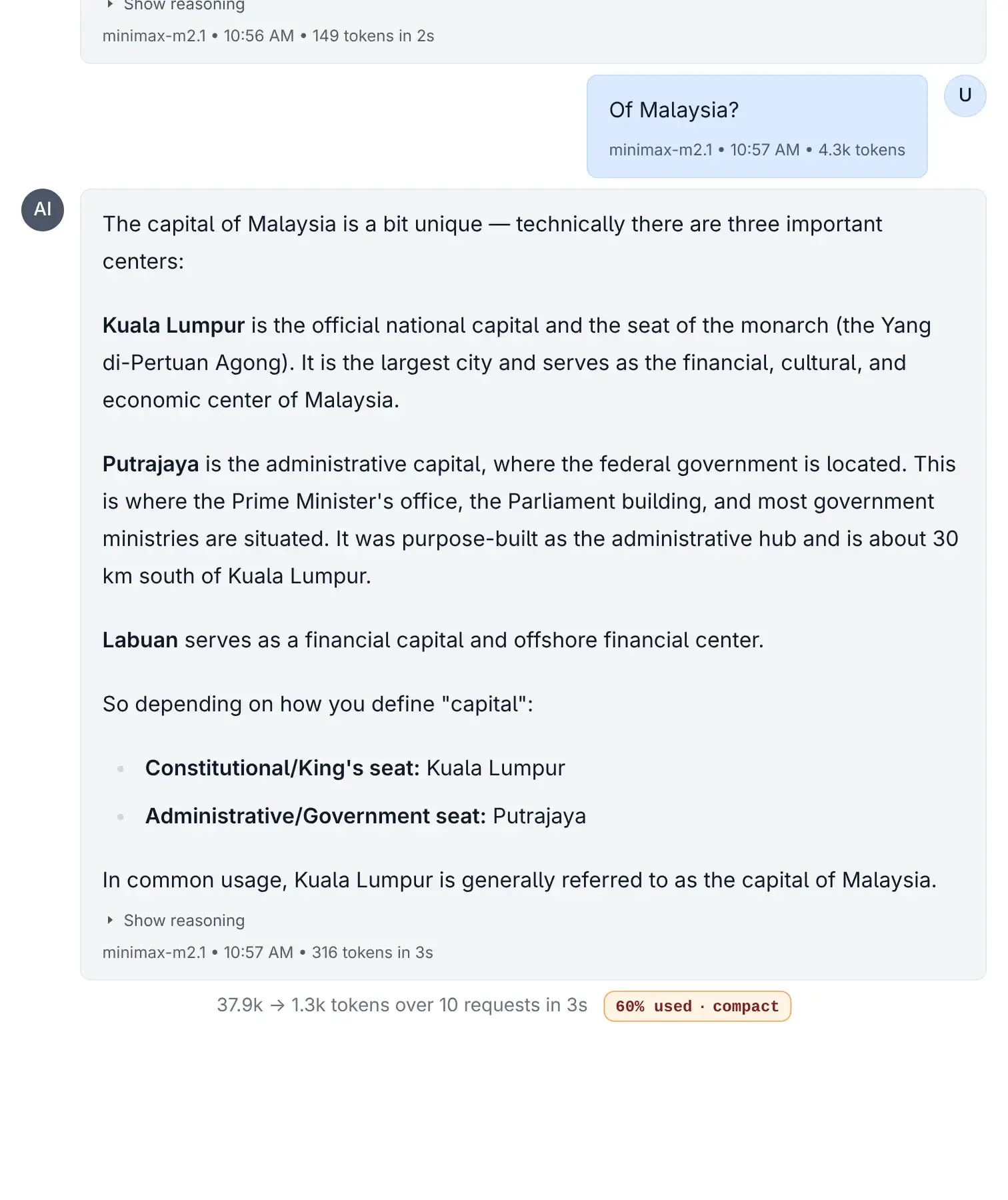

All text models support reasoning and tool use, making Fireworks an excellent choice for agentic workflows where speed matters. Here's Kimi K2.5 via Fireworks creating a retro Tetris game with full tool calling support:

Kimi K2.5 via Fireworks

Results at 125 tok/s

Fireworks Image Generation Models

Fireworks also hosts Black Forest Labs' FLUX.1 image generation models with fast inference and competitive per-image pricing:

| Model | Price | Billing unit |
|---|---|---|
| FLUX.1 Kontext Max | $0.08 | per image |
| FLUX.1 Kontext Pro | $0.04 | per image |
| FLUX.1 Dev FP8 | $0.0005 | per step |
| FLUX.1 Schnell FP8 | $0.00035 | per step |
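
Because the Dev and Schnell models are billed per step, the cost of a single image depends on how many inference steps a request uses. The following is a minimal sketch of that arithmetic using the prices from the table above. The step counts (28 and 4) are illustrative assumptions rather than documented defaults, and `estimate_image_cost` is a hypothetical helper, not part of llms.py:

```python
# Prices copied from the table above (USD).
PER_IMAGE = {
    "FLUX.1 Kontext Max": 0.08,
    "FLUX.1 Kontext Pro": 0.04,
}
PER_STEP = {
    "FLUX.1 Dev FP8": 0.0005,
    "FLUX.1 Schnell FP8": 0.00035,
}


def estimate_image_cost(model: str, steps: int = 1) -> float:
    """Return the estimated USD cost of generating one image."""
    if model in PER_IMAGE:
        return PER_IMAGE[model]  # flat price, the step count is irrelevant
    if model in PER_STEP:
        return round(PER_STEP[model] * steps, 6)
    raise KeyError(f"unknown model: {model}")


if __name__ == "__main__":
    # Step counts below are assumptions chosen only for illustration.
    print(estimate_image_cost("FLUX.1 Dev FP8", steps=28))
    print(estimate_image_cost("FLUX.1 Schnell FP8", steps=4))
    print(estimate_image_cost("FLUX.1 Kontext Pro"))
```

Under these assumed step counts, a per-step model can come out cheaper than the flat per-image models, but the comparison shifts as the step count grows.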

These models can be found in the model selector by selecting the Image output filter.

Here are examples to demonstrate the quality of each of the FLUX.1 models:

FLUX.1 Kontext Max

FLUX.1 Kontext Pro

FLUX.1 Dev

FLUX.1 Schnell

To get started, set your Fireworks API key:

export FIREWORKS_API_KEY=your_api_key_here

Then reset your providers configuration to pick up the new Fireworks models in providers-extra.json:

llms --reset providers

Mar 2, 2026

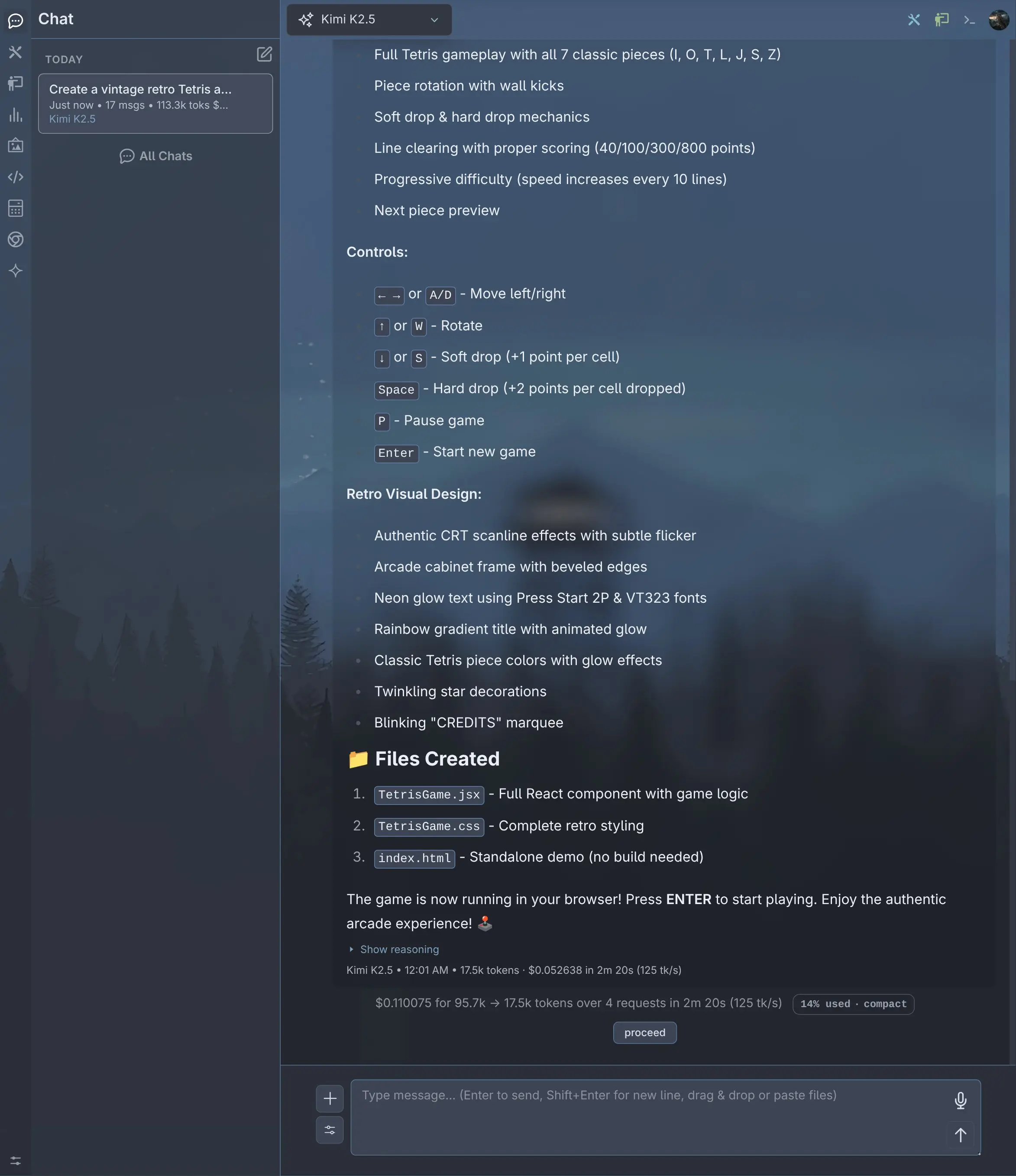

Credentials Auth Provider

The built-in credentials extension enables Username/Password authentication for your Application, including a Sign In page, user registration, role assignment, and account locking. It provides full user management through both the CLI and a web-based Admin UI, along with account self-service for all authenticated users.

Credentials is the default Auth Provider that's automatically enabled when at least one user has been created:

llms --adduser admin

After logging in as admin, you can create additional users from the Manage Users page, which can be accessed from the user menu.

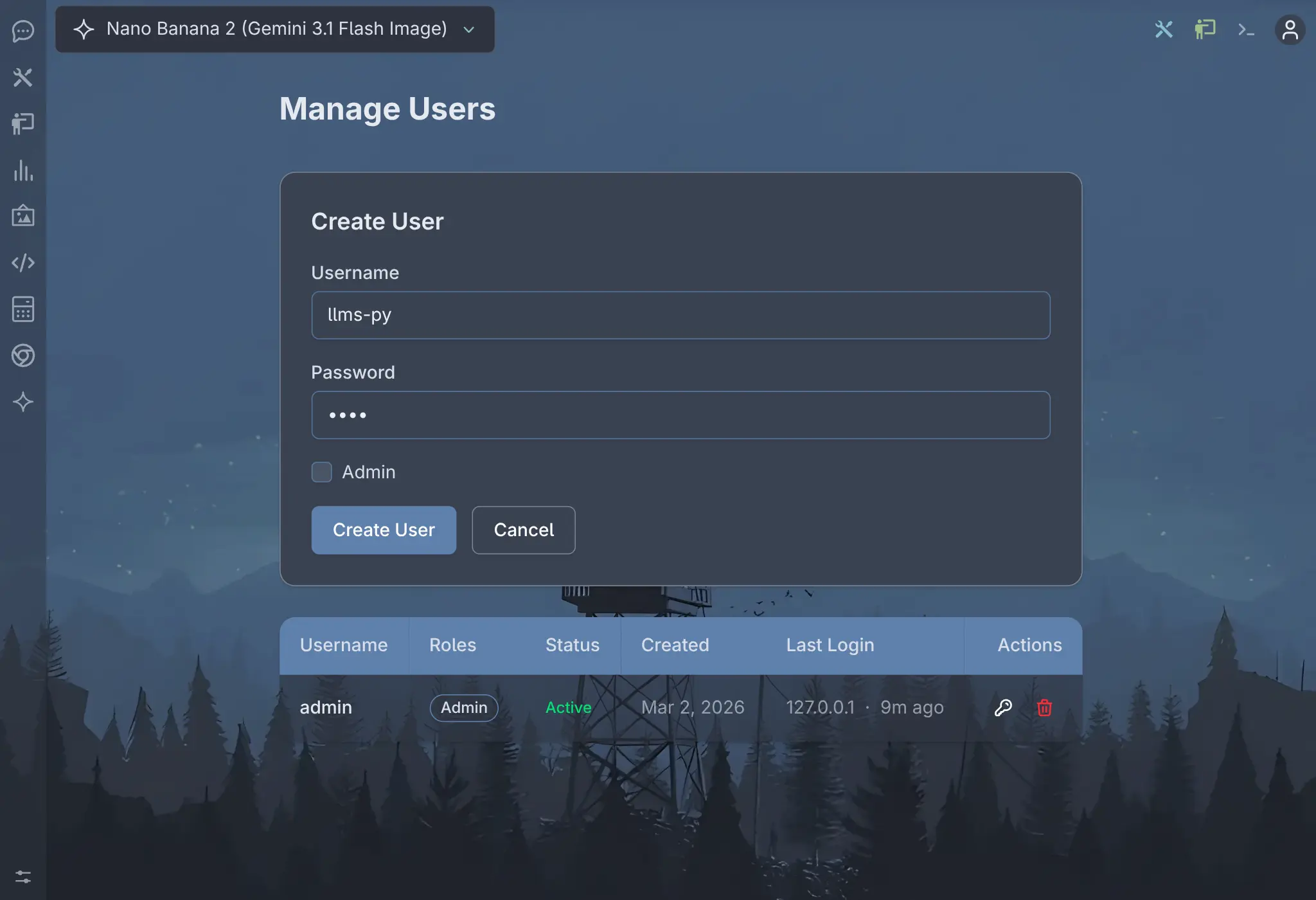

Manage Users

Create User

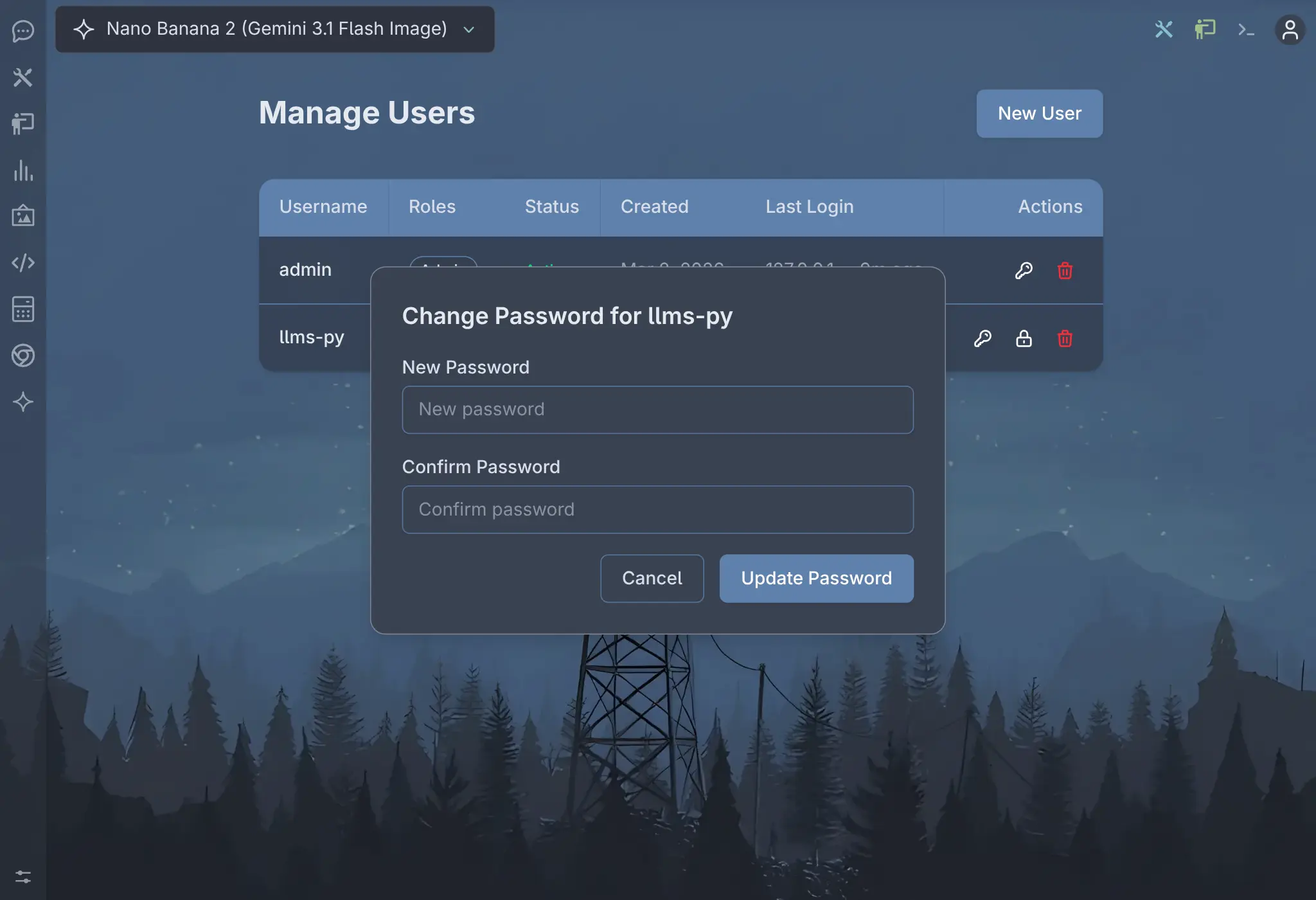

Change Password

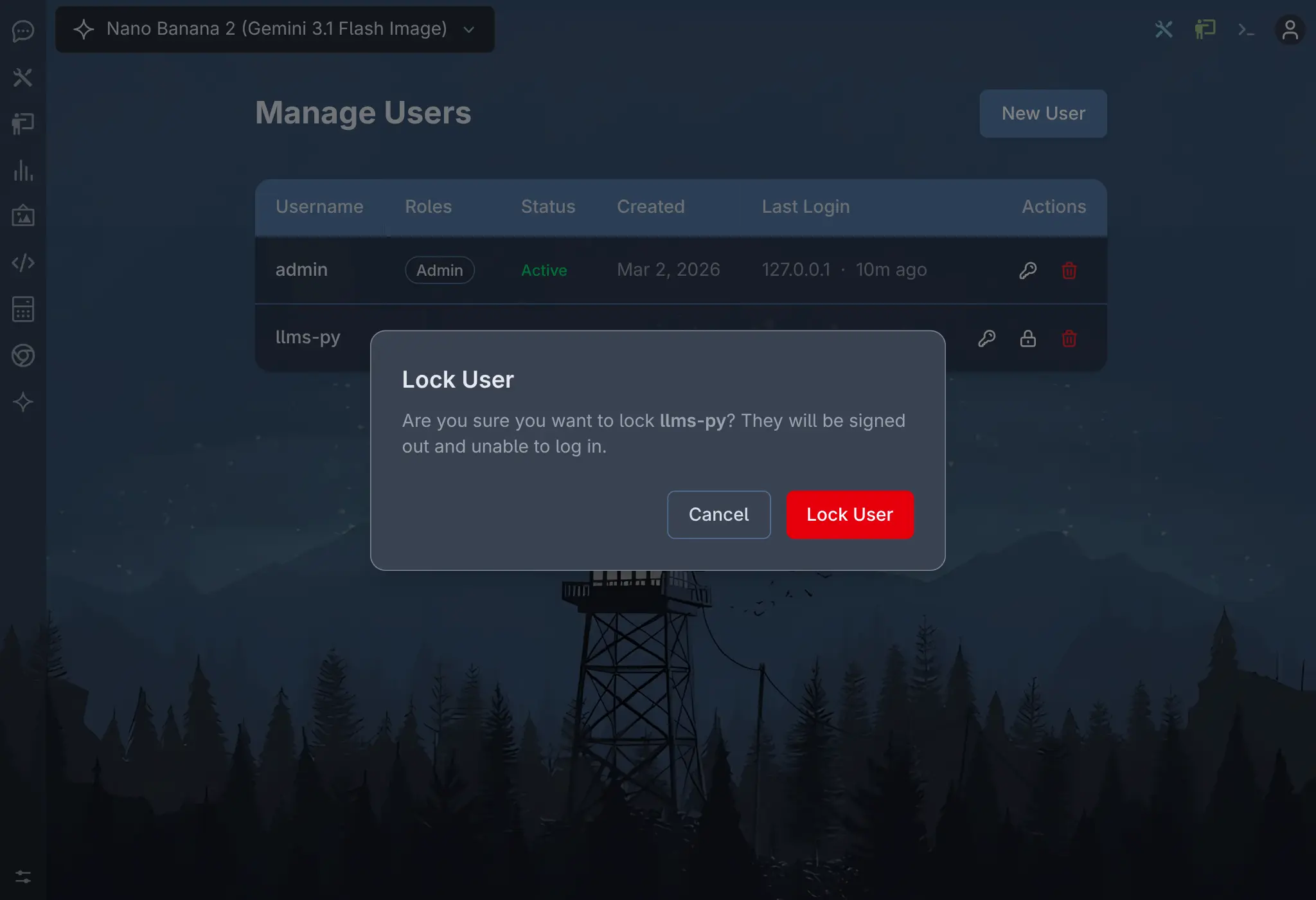

Lock User

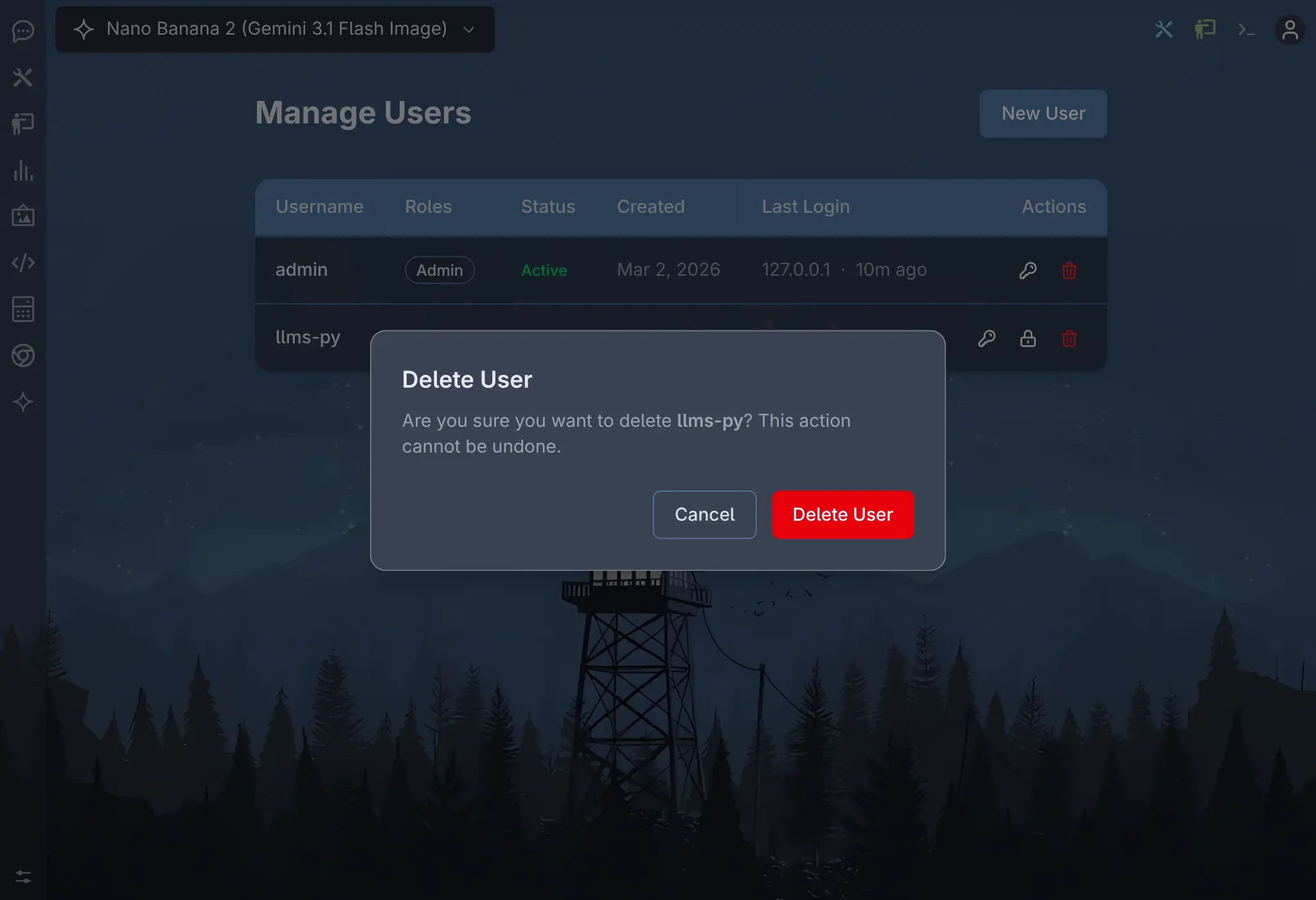

Delete User

My Account

See the Credentials Auth docs for more details.

Feb 27, 2026

Nano Banana 2

Added Google's latest Gemini Nano Banana 2 model, available from both the Google and OpenRouter providers. The Google/Gemini provider supports multi-turn chat history in conversations, while OpenRouter sends a fresh chat request for each message.

You can find it in the model selector by selecting the Image output filter and searching for "Nano Banana 2".

To get the latest model info with Nano Banana 2, reset your providers configuration:

llms --reset providers

Optimized Gallery Thumbnails

Gallery pages now use optimized thumbnails instead of full-size images. Detailed images, which increasingly approach or exceed 2MB, are now served as thumbnails under 10KB, delivering a noticeable performance improvement when scrolling through the infinite-scrolling gallery pages.

Reset to latest configuration

New versions sometimes include changes to the llms.json config, which isn't automatically updated.

Use the --reset option to reset the default configuration files back to their factory defaults. Available reset options (config, providers, all):

config - Reset ~/.llms/llms.json to default

llms --reset config

providers - Reset ~/.llms/providers.json and ~/.llms/providers-extra.json

llms --reset providers

Feb 25, 2026

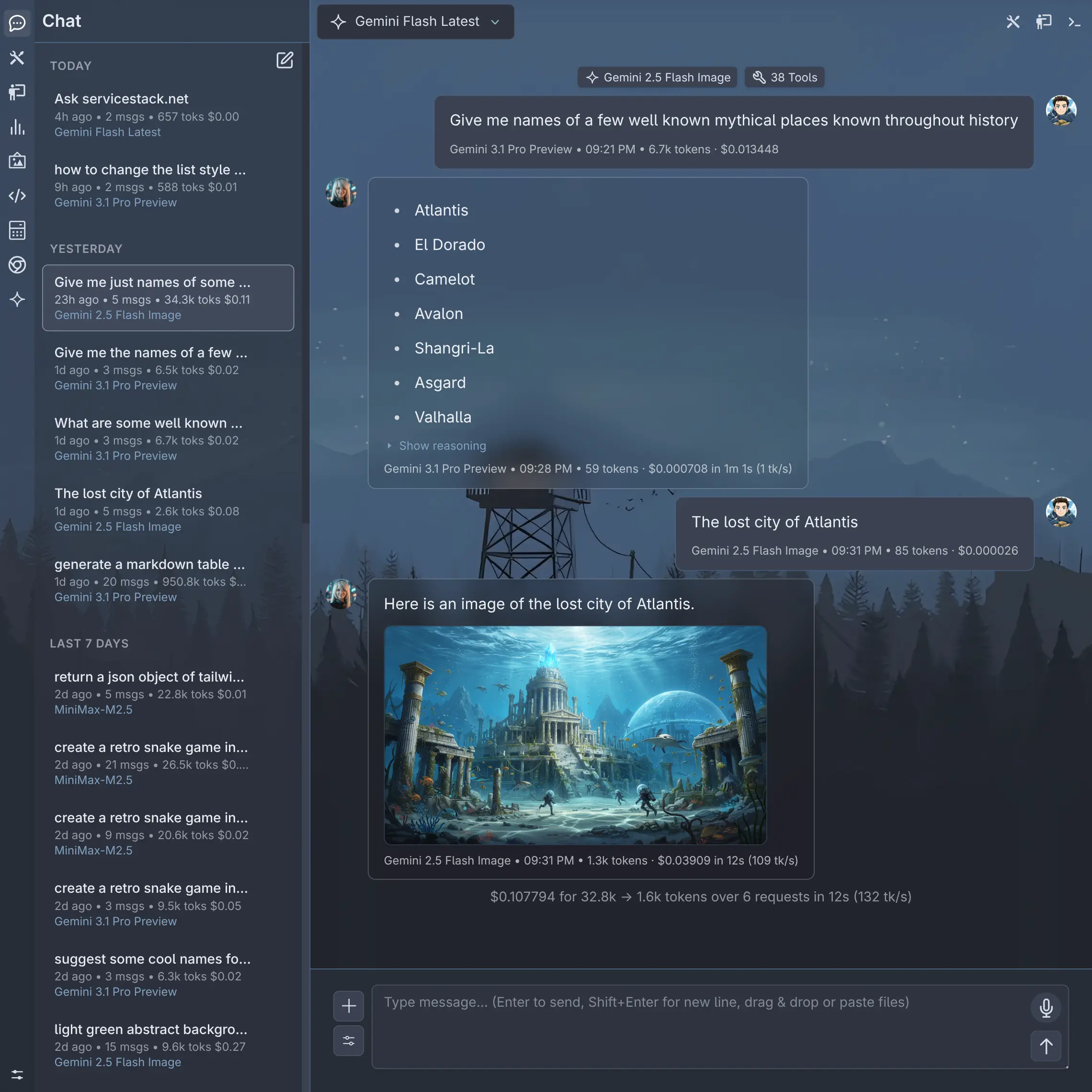

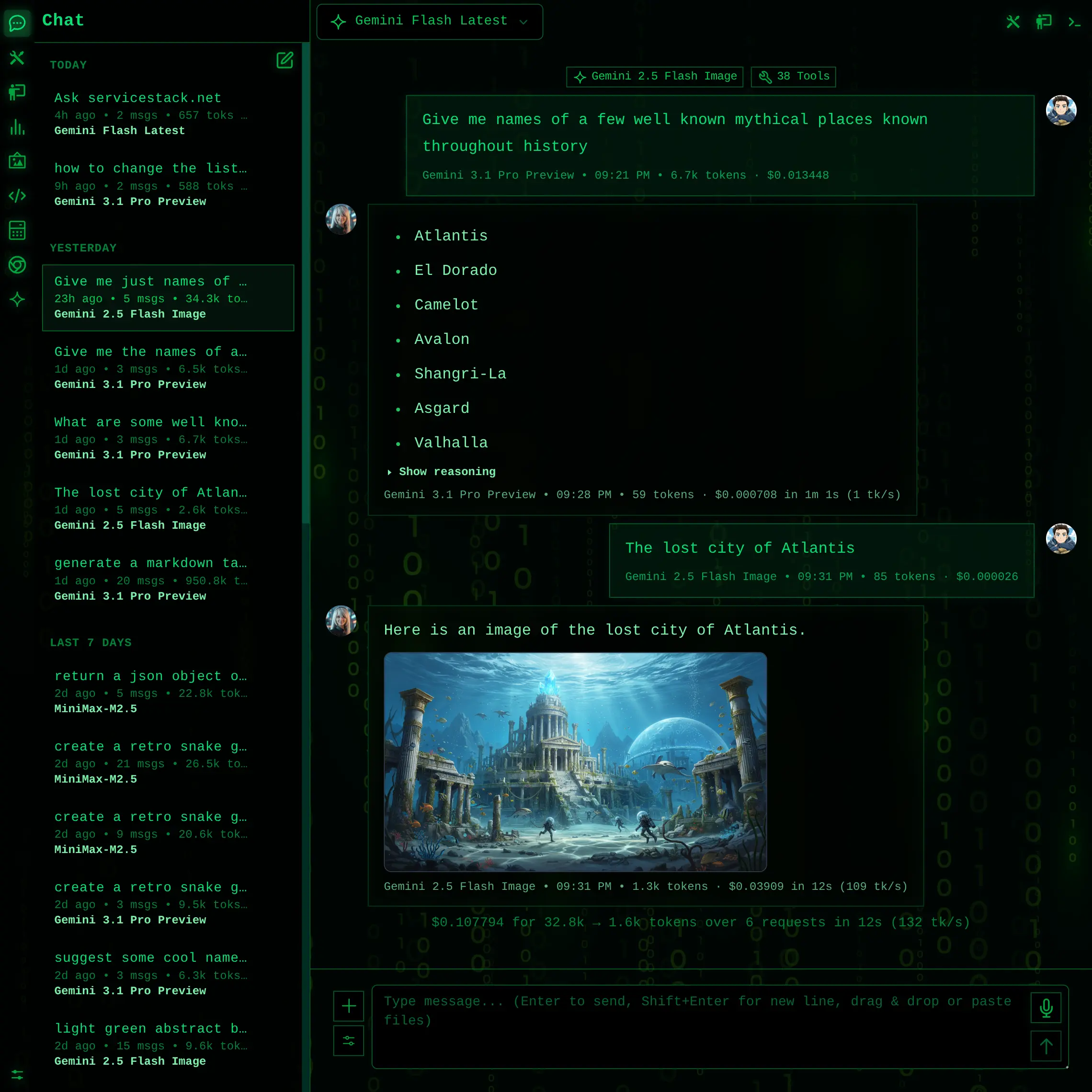

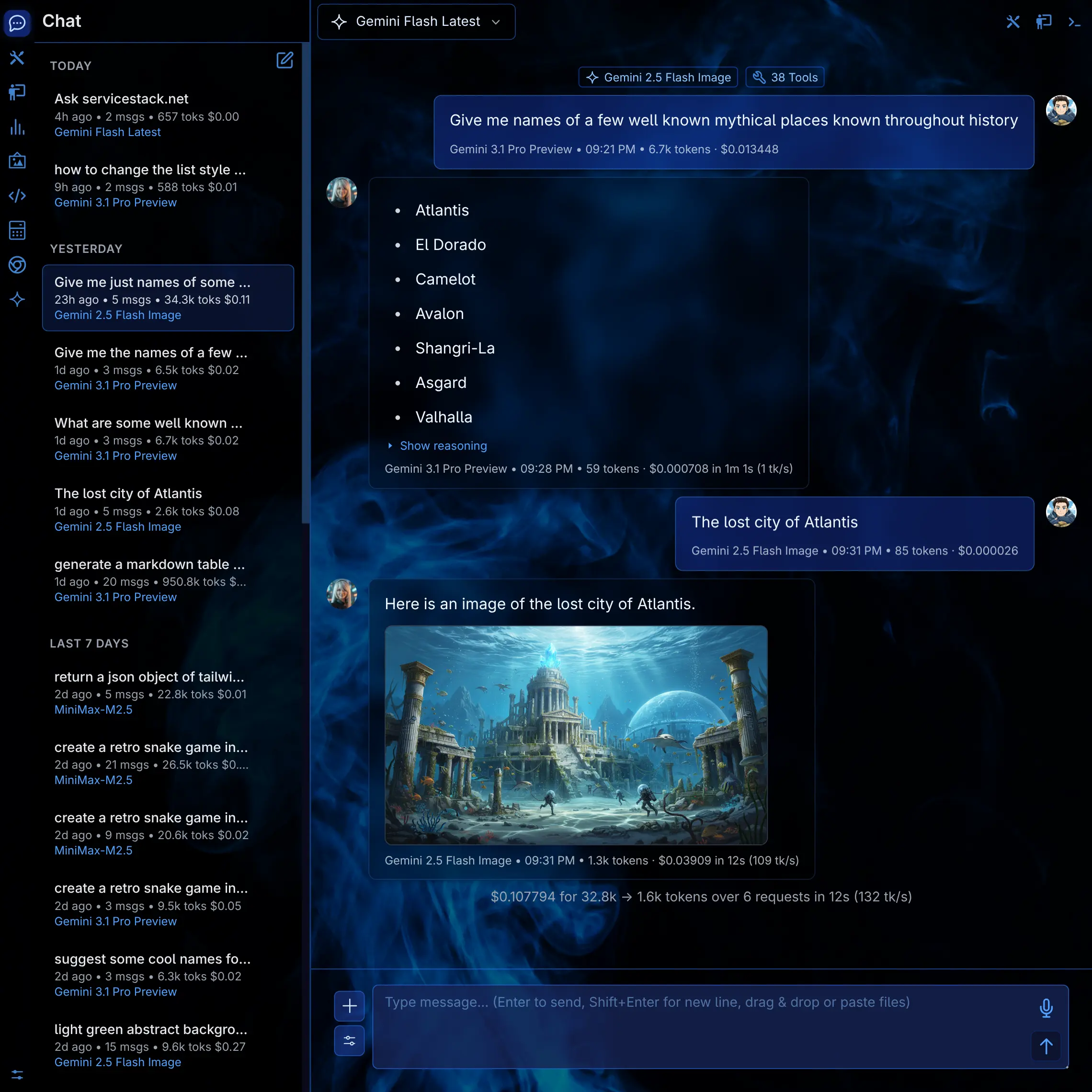

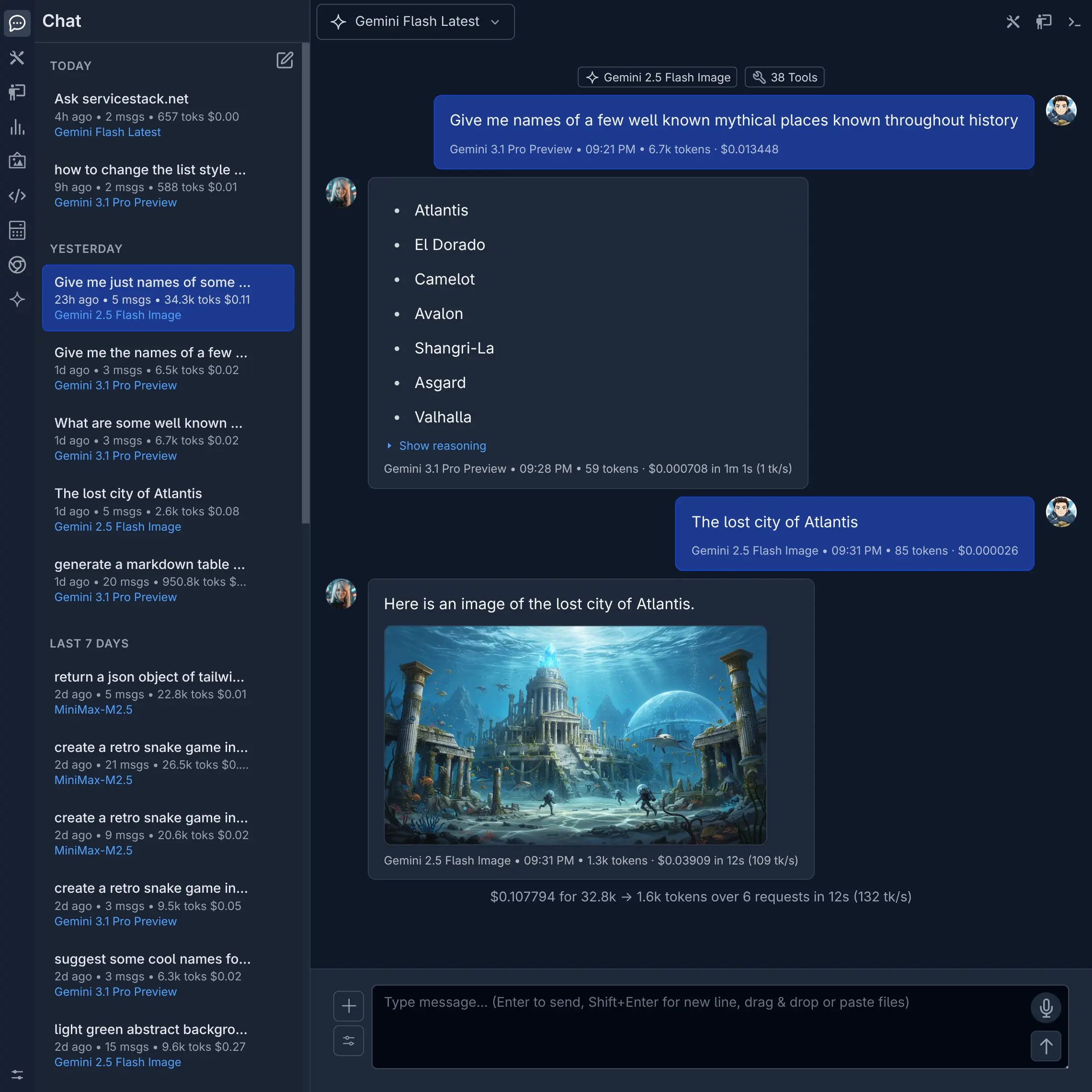

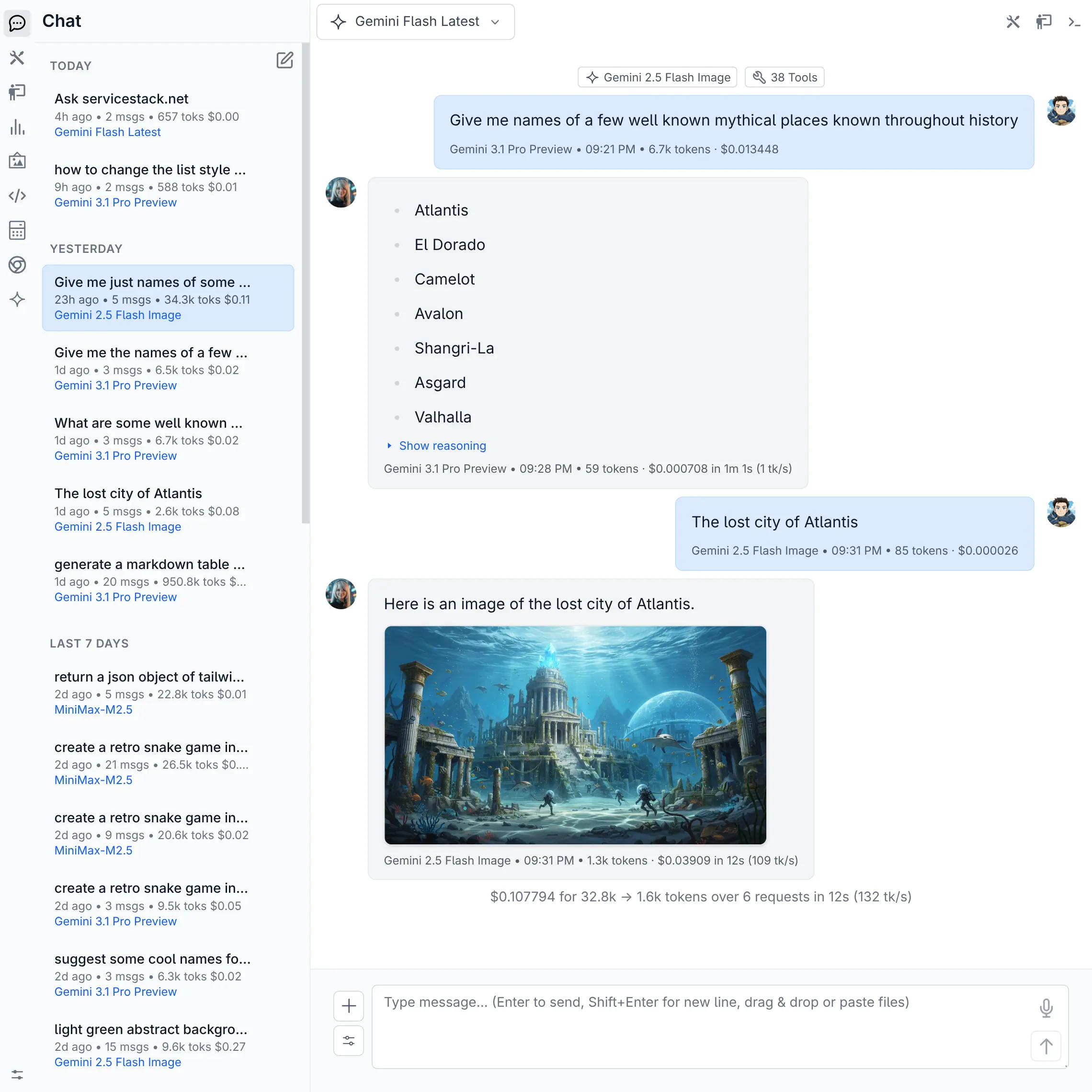

New Theme Support

llms.py now ships with 8 built-in themes - 🎨 4 dark and 🎨 4 light - so you can personalize the Web UI to match your style. Switch themes instantly from the home page or Settings, or create your own custom themes with full control over colors, typography, and background assets.

See the Themes docs for details on the available themes and how to create your own.

Feb 19, 2026

Agent Browser Editor

The browser extension provides an integrated environment for creating, editing, and running automated browser scripts powered by Vercel's agent-browser.

- 🖥️ Live Browser Preview - Clickable real-time screenshot with full mouse, keyboard, and scroll interaction

- 📋 Element Inspector - Auto-refreshing snapshot giving scripts and AI a precise map of interactive elements

- ✍️ AI Script Generation - Describe what you want in English and the AI generates the full automation script

- 🤖 AI-Assisted Editing - Select lines in the editor and describe changes to iterate on scripts incrementally

- ▶️ Run Selected Text - Run highlighted portions of a script to test individual steps in isolation

- 💾 Saved Scripts Library - Build a library of reusable browser automations accessible from the sidebar

See the browser docs for more details.

Feb 15, 2026

Standard Input

llms now accepts OpenAI-compatible Chat Completion requests via standard input, making it easy to integrate into shell pipelines and scripts.
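
When the prompt comes from a file or from user input, building the request with a JSON serializer avoids quoting problems. The following is a minimal Python sketch, assuming the `llms` executable is on your PATH and reads the request from standard input as described in this section. `build_request` and `ask` are hypothetical helper names, not part of llms.py:

```python
import json
import subprocess
from typing import Optional


def build_request(prompt: str, model: Optional[str] = None) -> str:
    """Serialize an OpenAI-compatible Chat Completion request to JSON."""
    request = {"messages": [{"role": "user", "content": prompt}]}
    if model is not None:
        request["model"] = model
    # json.dumps escapes quotes and newlines inside the prompt.
    return json.dumps(request)


def ask(prompt: str, model: Optional[str] = None) -> str:
    """Pipe the request to llms on stdin and return whatever it prints."""
    result = subprocess.run(
        ["llms"],
        input=build_request(prompt, model),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout


if __name__ == "__main__":
    print(ask('Summarize: He said "hello"\nand left.'))
```

Keeping serialization in `build_request` separate from the process call makes the request easy to inspect or log before anything is sent.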

When JSON is piped in, llms detects it automatically - no extra flags needed:

cat request.json | llms

Build requests inline with a heredoc:

llms <<EOF
{
  "model": "Minimax M2.5",
  "messages": [
    { "role": "user", "content": "Capital of France?" }
  ]
}
EOF

Combine with other CLI tools to generate requests dynamically:

echo '{"messages":[{"role":"user","content":"Summarize:'"$(cat notes.txt)"'"}]}' | llms

This pairs well with structured outputs support and jq to build end-to-end JSON pipelines:

(llms <<EOF
{
  "model": "moonshotai/kimi-k2-instruct",
  "messages": [{"role":"user", "content":"Return capital cities for: France, Italy, Spain, Japan." }],
  "response_format": {
    "type": "json_schema",
    "json_schema": {
      "name": "country_capitals",
      "schema": {
        "type": "object",
        "properties": {
          "capitals": {
            "type": "array",
            "items": {
              "type": "object",
              "properties": {
                "country": { "type": "string" },
                "capital": { "type": "string" }
              },
              "required": ["country","capital"]
            }
          }
        },
        "required": ["capitals"]
      }
    }
  }
}
EOF
) | jq -r '.capitals[] | "\(.country): \(.capital)"'

Output:

France: Paris
Italy: Rome
Spain: Madrid
Japan: Tokyo

Persistence Options

By default, all chat completions are saved to the database, including both the chat thread (conversation history) and the individual API request logs. Use these options to control what gets saved to the database:

--nohistory

Skip saving the chat thread (conversation history) to the database. The individual API request log is still recorded.

llms "What is the capital of France?" --nohistory

--nostore

Do not save anything to the database - no request log and no chat thread history. Implies --nohistory.

llms "What is the capital of France?" --nostore

Feb 9, 2026

Custom User and Agent Avatars

Personalize your chat experience with custom avatars for both yourself and AI agents. Upload images via the Settings page or manually add them to ~/.llms/users/ - supports .png, .svg, and auto-converts from other formats.

User Avatar

Agent Avatar

Compact Tool Calls with Smart Summarization

The new Compact Tool Calls feature automatically summarizes long tool arguments and outputs in the Chat UI to keep conversations concise while still providing access to important information as needed.

Tools Expanded

Tools Collapsed

Previously, even with long Tool Call Arguments minimized, you could still only see a few on a page. Now that they're collapsed by default, you can see more at a glance and expand only the ones you need.

Feb 8, 2026

Support for Voice Input

Added Voice Input extension with speech-to-text transcription via a microphone button or ALT+D shortcut, supporting three modes: local transcription with voxtype, custom transcribe executable, and cloud-based voxtral-mini-latest via Mistral.

- Added tok/s metrics in Chat UI on a per-message and per-thread basis

Feb 5, 2026

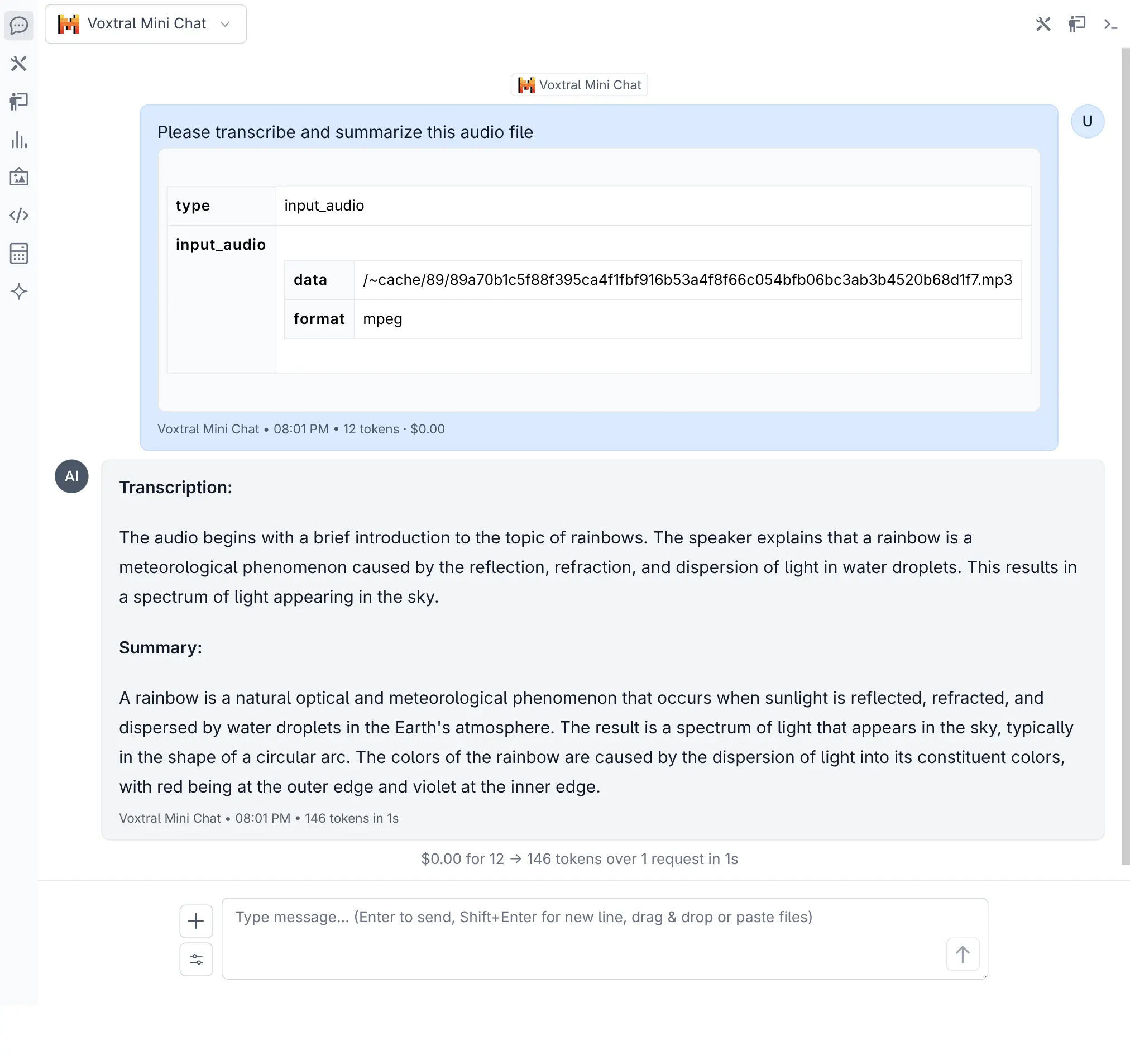

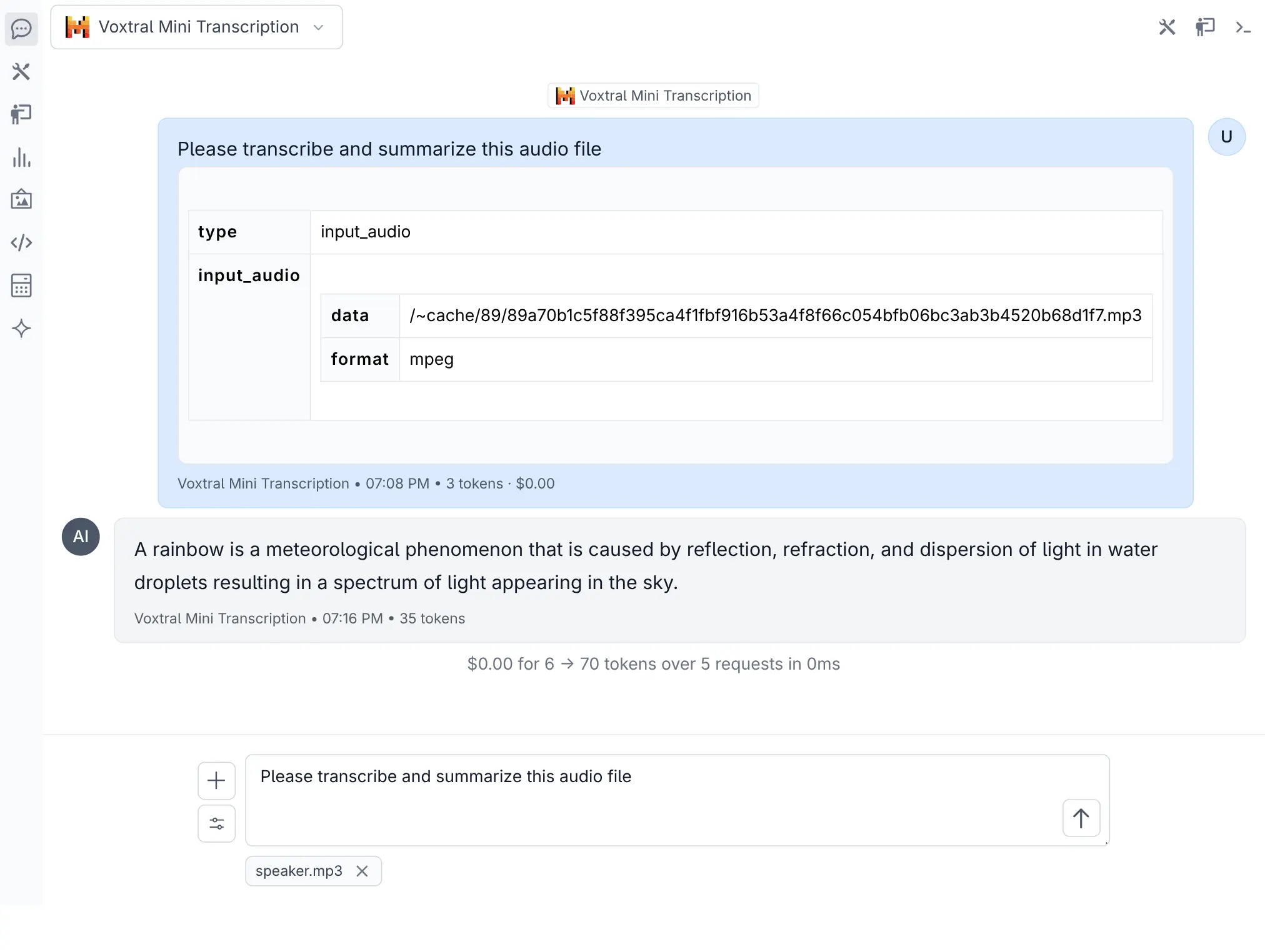

Voxtral Audio Models

Added support for Mistral's Voxtral audio transcription models - use the audio input filter in the model selector to find them.

Both the Chat Completion and dedicated Audio Transcription APIs deliver impressive speed, with the dedicated transcription endpoint returning results near-instantly.

Voxtral Chat

Voxtral Audio Transcription

Compact Threads

Added Compact Threads feature for managing long conversations - it summarizes the current thread into a new, condensed thread targeting 30% of the original context size. The compact button appears when a conversation exceeds 10 messages or uses more than 40% of the model's context limit.
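
The thresholds described above can be sketched as a simple check. This illustrates the stated rule only and is not the actual implementation; the function names and the token-count inputs are assumptions:

```python
MESSAGE_THRESHOLD = 10        # button appears above this many messages
CONTEXT_THRESHOLD_PCT = 40    # ...or above this percentage of the context limit
COMPACT_TARGET_PCT = 30       # condensed thread targets this percentage of the original


def should_show_compact(message_count: int, tokens_used: int, context_limit: int) -> bool:
    """Apply the rule: more than 10 messages OR more than 40% of the context used."""
    over_messages = message_count > MESSAGE_THRESHOLD
    # Integer comparison avoids floating-point edge cases at exactly 40%.
    over_context = tokens_used * 100 > context_limit * CONTEXT_THRESHOLD_PCT
    return over_messages or over_context


def compact_target_tokens(tokens_used: int) -> int:
    """Target size of the condensed thread: 30% of the original context size."""
    return tokens_used * COMPACT_TARGET_PCT // 100


if __name__ == "__main__":
    print(should_show_compact(message_count=4, tokens_used=60_000, context_limit=128_000))
    print(compact_target_tokens(60_000))
```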

Compact Button

Compact Button Intensity

The compaction model and prompts are fully customizable in ~/.llms/llms.json.

- Fix OpenRouter provider after models.dev switched to use @openrouter/ai-sdk-provider. Remove llms.json to reset to the default configuration:

rm ~/.llms/llms.json

Feb 3, 2026

- Removed duplicate filesystem tools from Core Tools; they're now only included in File System Tools

- Add sort_by and max_result options in search_files and made path an optional parameter to improve utility and reduce tool use error rates. path now defaults to the first allowed directory (project dir).

Feb 3, 2026

- Add support for overridable ClientTimeout limits in ~/.llms/llms.json:

{
  "limits": {
    "client_timeout": 120
  }
}

- Show proceed button for assistant messages without content but with reasoning
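
To apply the client_timeout override from a script rather than editing the file by hand, something like the following minimal sketch could work. It assumes ~/.llms/llms.json is plain JSON with a top-level "limits" object as shown above; `set_client_timeout` is a hypothetical helper, not part of llms.py:

```python
import json
from pathlib import Path

CONFIG_PATH = Path.home() / ".llms" / "llms.json"


def set_client_timeout(config: dict, value: int) -> dict:
    """Return a copy of config with limits.client_timeout set to value."""
    updated = dict(config)
    limits = dict(updated.get("limits", {}))  # keep any other limits intact
    limits["client_timeout"] = value
    updated["limits"] = limits
    return updated


if __name__ == "__main__":
    # Reads the existing config and rewrites it with the new limit.
    config = json.loads(CONFIG_PATH.read_text())
    CONFIG_PATH.write_text(json.dumps(set_client_timeout(config, 120), indent=2))
```

The helper returns a new dictionary instead of mutating its input, so the original config stays available if the write fails. Note that rewriting the file this way normalizes its indentation.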

Feb 2, 2026

Multi User Skills

When Auth is enabled, each user manages their own skill collection at ~/.llms/user/<user>/skills and can enable or disable skills independently. Shared global & project-level skills remain accessible but read-only.

Jan 31, 2026

- Refactor GitHub Auth out into a builtin github_auth extension

Jan 30, 2026

- Support for tool calling for models returned by local Ollama instances

- New openai-local provider for custom OpenAI-compatible endpoints

- Fix computer tool issues in Docker by only loading the computer tool if run in an environment with a display

Jan 29, 2026

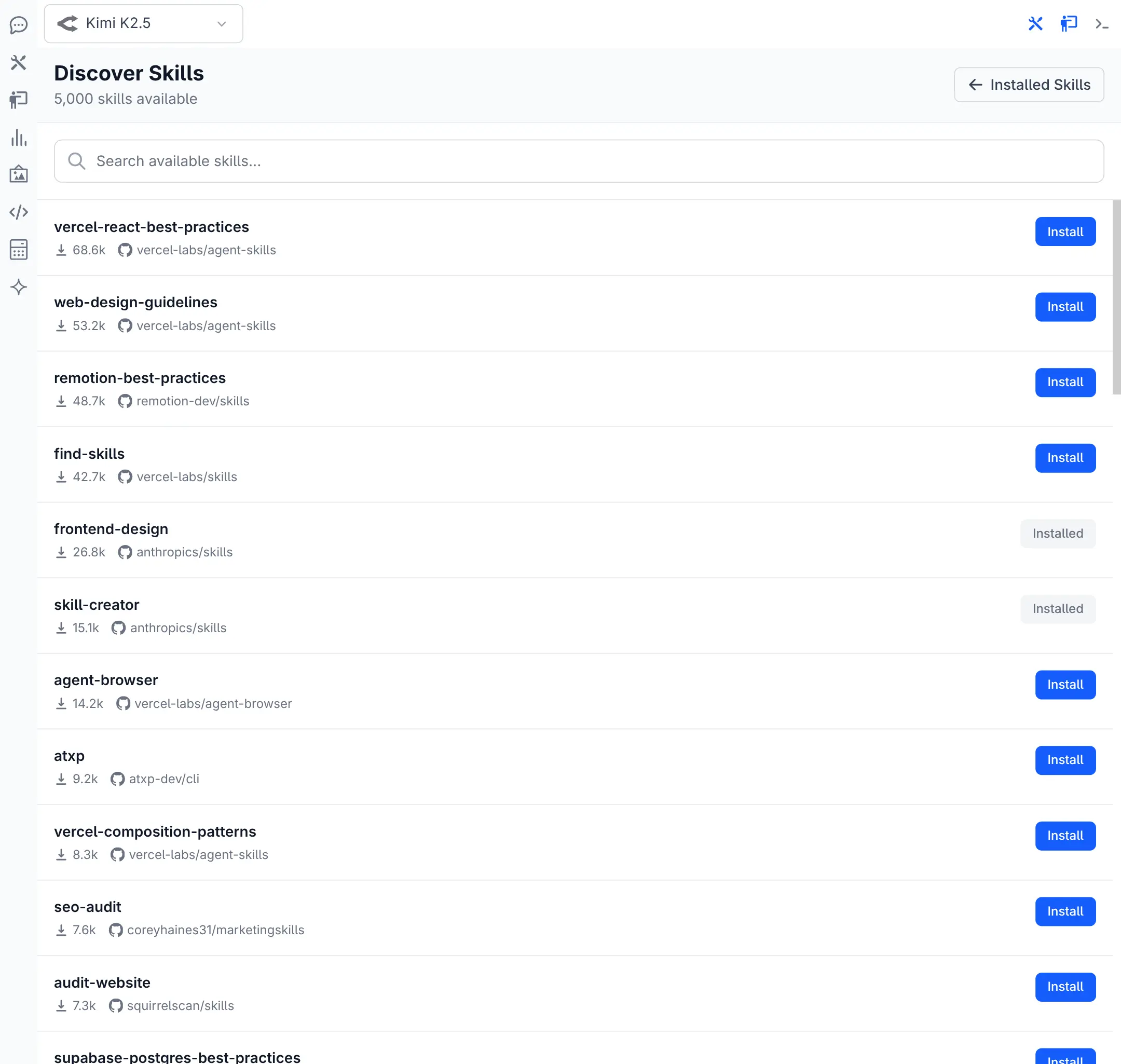

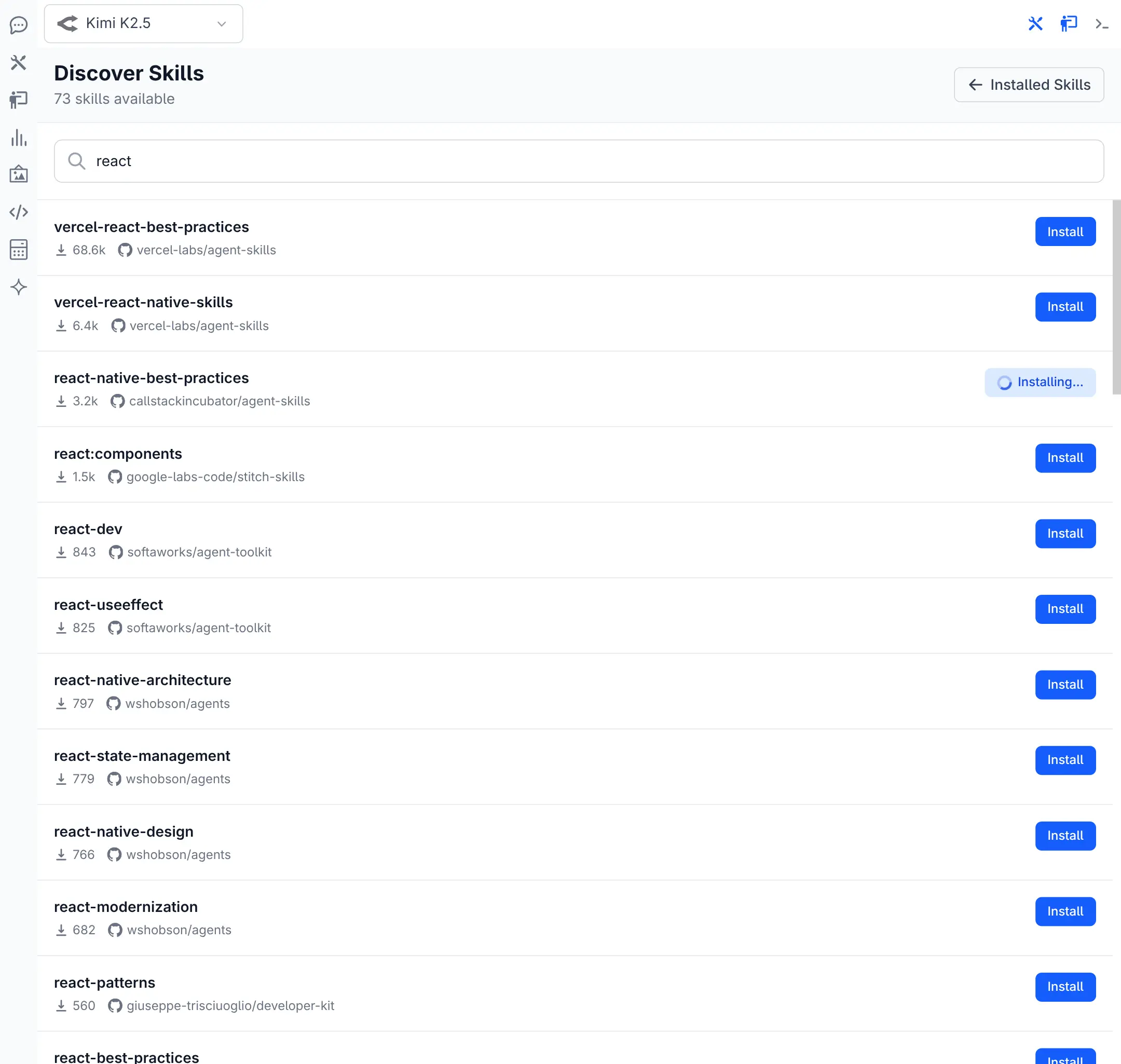

Skills Management

Added a full Skills Management UI for creating, editing, and deleting skills directly from the browser.

Skills package domain-specific instructions, scripts, references & assets that enhance your AI agent.

Browse & Install Skills

Added a Skill Browser with access to the top 5,000 community skills from skills.sh. Search, browse, and install pre-built skills directly into your personal collection.

Browse Skills

Installing Skill

Jan 28, 2026

- Use a barebones fallback markdown renderer when markdown renderers like KaTeX fail

- Use sanitizeHtml to avoid breaking layout when displaying rendered HTML

Jan 26, 2026

- Add copy button to TextViewer popover menu

- Add proceed and retry buttons at the bottom of Threads to continue agent loop

- Add filesystem tools in computer extension

- Add a simple sendUserMessage API in UI to simulate a new user message on the thread

- Implement TextViewer component for displaying Tool Args, Tool Output + SystemPrompt

Jan 24, 2026

- Auto collapse long tool args content and add ability to min/maximize text content

Jan 23, 2026

- Add built-in computer_use extension

v3 Released

See v3 release notes for details on the major new features and improvements in v3.